Bridging the Gaps: Data Consistency

Accio Analytics


Data consistency sounds like a technical requirement. In practice, the lack of it is a business risk.

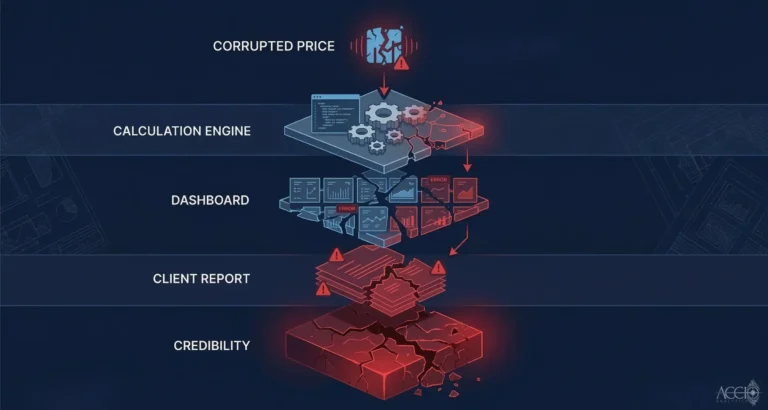

When two systems in the same firm show different values for the same instrument on the same day, you do not have an analytics problem. You have a consistency problem. The two systems ingested the same underlying data through different pipelines, processed it with different assumptions, and produced different results. Both think they are correct. Neither can be trusted.

This is the consistency gap that most financial institutions quietly tolerate. The workaround is manual reconciliation. Someone, usually an analyst, spends time comparing outputs from different systems to determine which one to use for a specific purpose. That time is the Manual Tax: the hidden labor cost of operating on inconsistent data.
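At its core, the comparison an analyst performs by hand is a keyed diff. The sketch below is a minimal illustration under stated assumptions, not Accio tooling. It joins two systems' end-of-day values on a hypothetical `(instrument, date)` key and reports every pair that disagrees or is missing on one side. The system names, identifiers, values, and tolerance are all invented. The mismatch is built to mimic two pipelines making different unit assumptions, with one storing a price in pounds and the other in pence.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Hypothetical key: (instrument identifier, business date as ISO 8601 string)
Key = Tuple[str, str]


@dataclass(frozen=True)
class Break:
    """One reconciliation break: a key where the two systems disagree."""
    key: Key
    value_a: Optional[float]  # None if system A has no value for this key
    value_b: Optional[float]  # None if system B has no value for this key


def find_breaks(system_a: Dict[Key, float],
                system_b: Dict[Key, float],
                rel_tol: float = 1e-9) -> List[Break]:
    """Return every (instrument, date) that is missing on one side or differs beyond rel_tol."""
    breaks: List[Break] = []
    for key in sorted(system_a.keys() | system_b.keys()):
        a = system_a.get(key)
        b = system_b.get(key)
        if a is None or b is None or not math.isclose(a, b, rel_tol=rel_tol):
            breaks.append(Break(key, a, b))
    return breaks


# Hypothetical data: the risk system stores XYZ in pounds, the accounting system in pence.
risk_system = {("XYZ", "2024-03-28"): 1.2345, ("ABC", "2024-03-28"): 50.0}
accounting_system = {("XYZ", "2024-03-28"): 123.45, ("ABC", "2024-03-28"): 50.0}

breaks = find_breaks(risk_system, accounting_system)
for brk in breaks:
    print(brk)  # only the XYZ row is reported; ABC agrees
```

A check like this only detects the gap. Someone still has to decide which value is correct for a given purpose, and that judgment is the labor the Manual Tax refers to.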

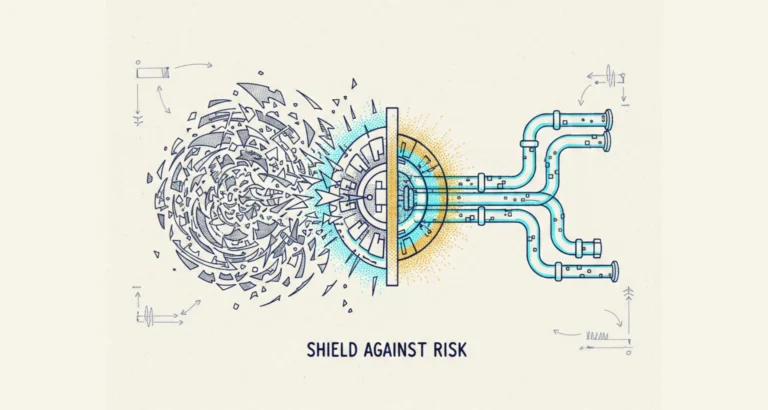

Data consistency requires normalization at the source. Before data from different vendors enters different systems, it needs to be translated into a single, consistent schema. Same field names. Same units. Same reference data. When every downstream system draws from the same normalized source, the consistency problem dissolves.
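The three requirements above (same field names, same units, same reference data) can be sketched as a single translation function applied at ingestion. This is a minimal sketch, not the Accio Ingestion Engine's implementation. The vendor names, field mappings, pence-to-pounds scale factor, and instrument identifiers are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Dict


@dataclass(frozen=True)
class CanonicalPrice:
    """The single schema every downstream system reads."""
    instrument_id: str  # internal reference-data identifier
    as_of: str          # business date, ISO 8601
    close_price: float  # major currency units (e.g. pounds, not pence)


# Hypothetical field mappings: vendor field name -> canonical field name
FIELD_MAP = {
    "vendor_a": {"ticker": "vendor_code", "date": "as_of", "px_close": "close_price"},
    "vendor_b": {"symbol": "vendor_code", "trade_date": "as_of", "last": "close_price"},
}

# Hypothetical unit conversion: multiply the vendor's price by this to get major units
PRICE_SCALE = {"vendor_a": 1.0, "vendor_b": 0.01}  # vendor_b is assumed to quote in pence

# Hypothetical reference data: (vendor, vendor instrument code) -> internal instrument id
REFERENCE_DATA = {
    ("vendor_a", "XYZ LN"): "INST-0001",
    ("vendor_b", "XYZ.L"): "INST-0001",
}


def normalize(vendor: str, record: Dict[str, Any]) -> CanonicalPrice:
    """Translate one raw vendor record into the canonical schema.

    Raises KeyError for an unknown vendor, a missing field, or an unmapped
    instrument, so gaps surface at ingestion instead of in downstream systems.
    """
    # Same field names: rename vendor fields to canonical ones
    fields = {canonical: record[raw] for raw, canonical in FIELD_MAP[vendor].items()}
    # Same reference data: resolve the vendor code to one internal identifier
    instrument_id = REFERENCE_DATA[(vendor, fields["vendor_code"])]
    # Same units: convert the price to major currency units
    close_price = float(fields["close_price"]) * PRICE_SCALE[vendor]
    return CanonicalPrice(instrument_id=instrument_id, as_of=fields["as_of"], close_price=close_price)


a = normalize("vendor_a", {"ticker": "XYZ LN", "date": "2024-03-28", "px_close": 1.2345})
b = normalize("vendor_b", {"symbol": "XYZ.L", "trade_date": "2024-03-28", "last": 123.45})
print(a)
print(b)
```

Both vendor records resolve to the same instrument, date, and price (equal within floating-point tolerance), so any system that reads `CanonicalPrice` values sees one answer. Failing loudly on an unmapped instrument, rather than passing the raw vendor code through, is a deliberate choice in this sketch. It keeps an unresolved identifier from quietly reintroducing the inconsistency downstream.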

The Accio Ingestion Engine provides this normalization layer. It ingests from multiple vendor feeds, normalizes all inputs to a consistent schema, and distributes clean, consistent data to consuming systems. One source of truth. No reconciliation required.

By Sean Mentore, Co-Founder & Chief Architect, Accio Analytics

Technical Whiteboard Session

Sean is offering a 30-minute technical whiteboard session with your lead architect and head of operations to:

- Map your current ETL flow for data ingestion

- Identify the specific points where your system leaks capital

- Identify where your data lineage fails the Proof of Origin test
