Bad data in a financial institution is not a technical nuisance. It is an operational liability.

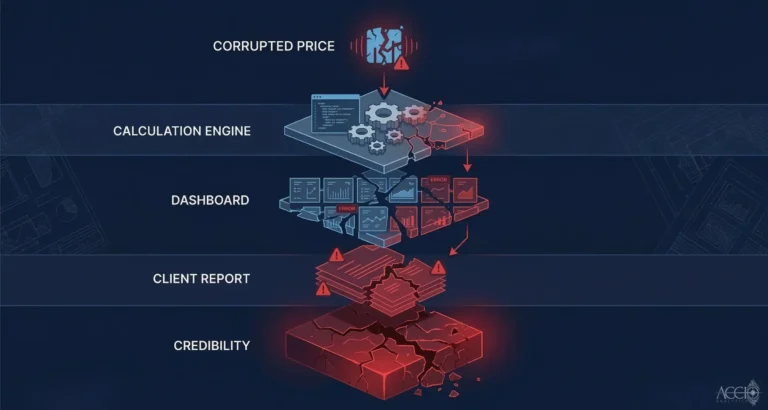

Most data quality conversations in financial services focus on the output side. The report has wrong numbers. The dashboard shows an anomaly. The client flags a discrepancy. By the time those problems surface, the underlying data quality issue has already been in the system for hours or days. Every calculation that ran during that window produced results built on a false foundation.

The risk of poor data quality compounds at every layer of your infrastructure. The calculation engine trusts the data it receives. The risk model runs on what the calculation engine produces. The client report reflects what the risk model outputs. A single upstream data quality failure does not create one problem. It creates a cascade.

I have watched this play out at financial institutions that had sophisticated systems downstream and no systematic quality control upstream. The analytics were powerful. The data entering those analytics was unmanaged. The result was a technically excellent system producing results no one could fully trust.

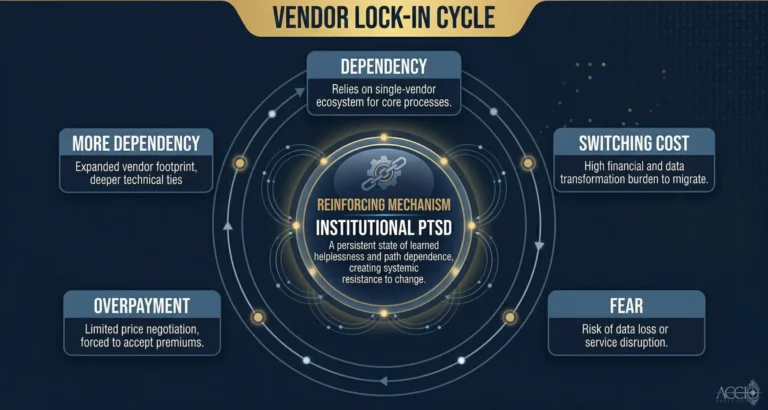

The specific data quality issues that create the most operational risk are not exotic edge cases. They are structural. Vendor feeds that deliver the same instrument priced differently on the same day. Schema changes introduced by a data provider without notice. Null values where a calculation expects a number. Timestamps that do not align across feeds. These are the everyday data quality failures that most institutions handle through manual reconciliation rather than systematic prevention.
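To make the first of these failures concrete, here is a minimal sketch of a check for the same instrument priced differently by two vendors on the same day. The record shape, the vendor names, the instrument identifiers, and the `tolerance` threshold are all illustrative assumptions, not a description of any particular feed.

```python
from collections import defaultdict
from datetime import date
from decimal import Decimal

# Hypothetical record shape: (vendor, instrument_id, price_date, price).
quotes = [
    ("vendor_a", "INSTR-001", date(2024, 3, 1), Decimal("101.25")),
    ("vendor_b", "INSTR-001", date(2024, 3, 1), Decimal("101.90")),
    ("vendor_a", "INSTR-002", date(2024, 3, 1), Decimal("99.10")),
    ("vendor_b", "INSTR-002", date(2024, 3, 1), Decimal("99.10")),
]


def find_price_conflicts(quotes, tolerance=Decimal("0.05")):
    """Return {(instrument_id, price_date): {vendor: price}} for every
    instrument/day where vendor prices differ by more than `tolerance`."""
    by_key = defaultdict(dict)
    for vendor, instrument_id, price_date, price in quotes:
        by_key[(instrument_id, price_date)][vendor] = price

    conflicts = {}
    for key, prices in by_key.items():
        # The spread between the highest and lowest vendor price.
        if max(prices.values()) - min(prices.values()) > tolerance:
            conflicts[key] = prices
    return conflicts


conflicts = find_price_conflicts(quotes)
for (instrument_id, price_date), prices in conflicts.items():
    print(instrument_id, price_date, prices)
```

With the sample data, only `INSTR-001` is reported, because its two vendor prices differ by more than the assumed tolerance. A real tolerance would likely depend on asset class and liquidity.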

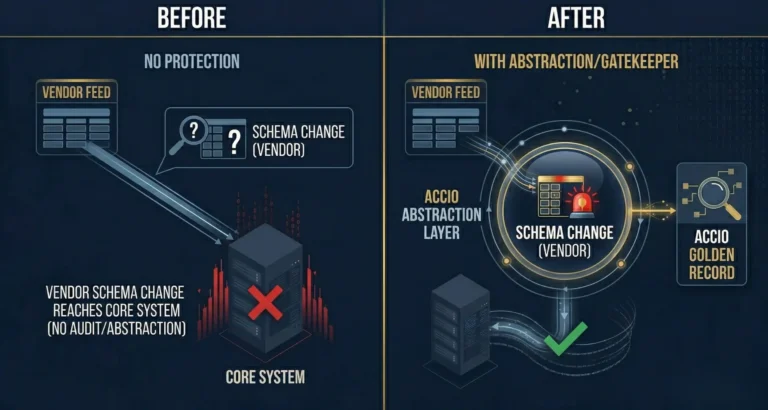

Prevention happens at ingestion. Before data from a vendor feed reaches your core systems, it needs to be validated against expected schemas, normalized to a consistent format, and checked for anomalies. That is what systematic data quality control looks like at the infrastructure level. Not a report that identifies problems after the fact. A gate that catches them before they propagate.
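The three steps above (validate against a schema, normalize, check for anomalies) can be sketched as a small gate function. This is an illustration under stated assumptions, not the Accio Ingestion Engine's implementation: the field names in `REQUIRED_FIELDS`, the `GateResult` type, and the 20% `max_move` threshold are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal, InvalidOperation

# Hypothetical schema: fields every vendor record is assumed to carry.
REQUIRED_FIELDS = ("instrument_id", "price", "as_of")


@dataclass
class GateResult:
    accepted: list  # normalized records that passed every check
    rejected: list  # (raw_record, reason) pairs


def normalize(record):
    """Validate a raw record and coerce it to one consistent shape.
    Raises ValueError when the record does not match the schema."""
    missing = [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    if missing:
        raise ValueError(f"missing or null fields: {missing}")
    try:
        price = Decimal(str(record["price"]))
    except InvalidOperation:
        raise ValueError(f"price is not numeric: {record['price']!r}") from None
    if not price.is_finite():
        raise ValueError("price is NaN or infinite")
    as_of = datetime.fromisoformat(str(record["as_of"]))
    if as_of.tzinfo is None:
        raise ValueError("timestamp has no timezone offset")
    return {
        "instrument_id": str(record["instrument_id"]).strip().upper(),
        "price": price,
        "as_of": as_of.astimezone(timezone.utc),  # align all feeds to UTC
    }


def run_gate(records, last_prices, max_move=Decimal("0.20")):
    """Accept only records that pass schema and anomaly checks."""
    result = GateResult(accepted=[], rejected=[])
    for raw in records:
        try:
            clean = normalize(raw)
        except ValueError as exc:
            result.rejected.append((raw, str(exc)))
            continue
        previous = last_prices.get(clean["instrument_id"])
        if clean["price"] <= 0:
            result.rejected.append((raw, "non-positive price"))
        elif previous and abs(clean["price"] - previous) / previous > max_move:
            result.rejected.append((raw, "price moved more than max_move"))
        else:
            result.accepted.append(clean)
    return result


feed = [
    {"instrument_id": " instr-001 ", "price": "101.25", "as_of": "2024-03-01T16:00:00+00:00"},
    {"instrument_id": "INSTR-002", "price": None, "as_of": "2024-03-01T16:00:00+00:00"},
    {"instrument_id": "INSTR-003", "price": "150.00", "as_of": "2024-03-01T16:00:00+00:00"},
]
result = run_gate(feed, last_prices={"INSTR-003": Decimal("100.00")})
print(len(result.accepted), "accepted,", len(result.rejected), "rejected")
```

Rejected records are kept alongside a reason rather than silently dropped, so operations staff can see what the gate stopped and why. Only the accepted list would be passed on to downstream systems.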

The Accio Ingestion Engine is built around this principle. Every incoming record is validated. Every transformation is logged. Every anomaly is flagged before it reaches your downstream systems. You get full traceability. Every output can be traced back to the raw input it came from. The Glass Box approach to data quality is not a feature. It is the architecture.

By Sean Mentore, Co-Founder & Chief Architect, Accio Analytics

Technical Whiteboard Session

Sean is offering a 30-minute technical whiteboard session with your lead architect and head of operations. In that session, he will:

- Map your current ETL flow for data ingestion

- Identify the specific points where your system leaks capital

- Identify where your data lineage fails the Proof of Origin test
